The paper that changed everything

In June 2017, Ashish Vaswani and seven co-authors, most of them at Google, published a paper called "Attention Is All You Need." The paper introduced a new neural network architecture for sequence processing, called the transformer.

The transformer outperformed the best previous models on standard machine translation benchmarks. Within a couple of years, transformers were dominating natural language processing. Within a few more, they were the basis of GPT-3, then ChatGPT, Claude, Gemini, LLaMA, and essentially every consequential modern AI system that handles language.

It's hard to overstate how consequential one paper has been. The transformer architecture is arguably the most important computing development of the late 2010s.

What it replaced

Before transformers, the dominant architectures for sequence data were recurrent neural networks (RNNs) and their fancier variants like LSTMs (long short-term memory networks).

RNNs process sequences word by word. They maintain a "hidden state" that gets updated at each step, summarizing everything seen so far. To translate a sentence, the model reads word 1, updates its state; reads word 2, updates; and so on until done; then generates the output one word at a time, again sequentially.

This worked, but had problems:

- Slow. Each step depends on the previous, so you can't parallelize. On modern hardware that loves parallel processing, this is a huge waste.

- Long-range dependencies fade. By the time the network has processed a long sentence, the early words' influence is diluted. RNNs struggled with sentences requiring distant context.

- Hard to train. Gradients tend to vanish or explode as they are propagated back through many time steps, which made training on long sequences unreliable.

LSTMs fixed some of this but didn't solve the fundamental issue: sequential processing is slow, and information has to travel through many steps.
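The sequential bottleneck described above is easy to see in code. The following is a minimal sketch of a plain RNN forward pass in NumPy; the function name `rnn_forward`, the weight names, and all sizes are illustrative assumptions, not taken from any particular library. The point is the `for` loop: each hidden state needs the previous one, so the steps cannot run in parallel.

```python
import numpy as np

def rnn_forward(inputs, W_xh, W_hh, b_h):
    """Run a plain RNN over a sequence and return the hidden state at every step."""
    hidden = np.zeros(W_hh.shape[0])
    states = []
    for x_t in inputs:
        # Step t needs the hidden state from step t-1, so this loop is inherently serial.
        hidden = np.tanh(W_xh @ x_t + W_hh @ hidden + b_h)
        states.append(hidden)
    return np.stack(states)

rng = np.random.default_rng(0)
seq_len, d_in, d_hidden = 6, 4, 8          # illustrative sizes only
inputs = rng.normal(size=(seq_len, d_in))  # stand-in for word embeddings
W_xh = rng.normal(size=(d_hidden, d_in)) * 0.1
W_hh = rng.normal(size=(d_hidden, d_hidden)) * 0.1
b_h = np.zeros(d_hidden)

states = rnn_forward(inputs, W_xh, W_hh, b_h)
print(states.shape)  # (6, 8): one hidden state per input position
```

Information from the first word reaches the last hidden state only by passing through every intermediate update, which is where the dilution of early context comes from.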

The transformer's key idea

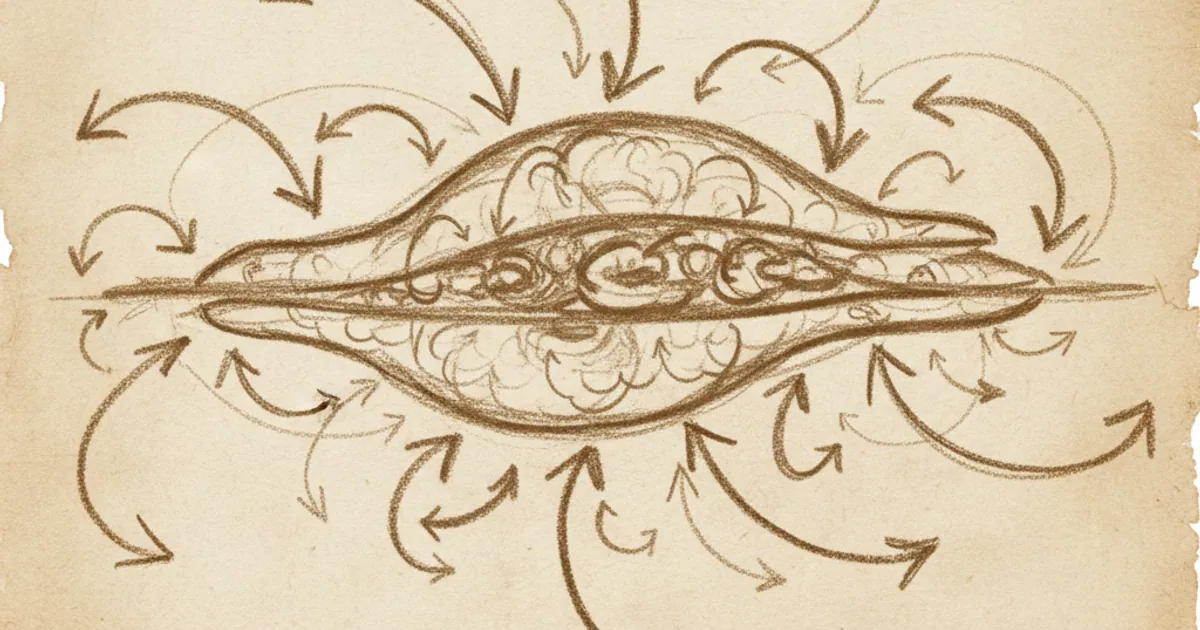

The transformer replaces sequential processing with attention. Instead of building up information step by step, every position in the sequence can directly look at every other position to decide what's relevant.

The central mechanism is called self-attention. For each word (or token) in the sequence, the model:

- Asks: "what other words should I be paying attention to?"

- Computes an attention score for every position in the sequence, including its own.

- Combines all positions, weighted by attention scores, to produce its updated representation.

That's it. Each word's new representation is a weighted blend of the information at every position, with the weights determined by relevance.

Three crucial properties:

- Parallel. During training, all positions are processed simultaneously, since each just looks at the rest. This is way faster on GPUs than RNNs' sequential approach. (Generation is still one token at a time.)

- Long-range. Any word can directly attend to any other, regardless of distance. Information no longer has to survive a long chain of intermediate steps.

- Scalable. The architecture works better with more layers, more parameters, and more data — a property that holds far beyond what previous architectures could exploit.

How attention actually works

A bit more detail. Each word's embedding (see the embeddings article) is projected into three vectors:

- Query (Q). "What am I looking for?"

- Key (K). "What can I be matched against?"

- Value (V). "What information do I carry?"

The attention computation for a given word:

- Take its query Q.

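- Compute the dot product of Q with every word's key K, including its own. This gives one attention score per word. In the original paper the scores are also divided by the square root of the key dimension, which keeps them in a range where softmax behaves well.

- Apply softmax to turn the scores into weights (non-negative, summing to 1).

- Multiply each word's value V by its weight and sum them all up.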

The result is the word's "attended" representation — a blend of values weighted by relevance.
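The steps above can be sketched in a few lines of NumPy. This is a minimal single-head illustration, not a production implementation. The weight matrices `W_q`, `W_k`, and `W_v` are random stand-ins for learned parameters, the sizes are arbitrary, and the division by the square root of the key dimension follows the "scaled dot-product" form from the original paper.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, W_q, W_k, W_v):
    """Single-head self-attention over a sequence of embeddings X (n_tokens, d_model)."""
    Q = X @ W_q                                  # what each token is looking for
    K = X @ W_k                                  # what each token can be matched against
    V = X @ W_v                                  # what information each token carries
    scores = Q @ K.T / np.sqrt(K.shape[-1])      # (n_tokens, n_tokens) pairwise scores
    weights = softmax(scores, axis=-1)           # each row sums to 1
    return weights @ V, weights                  # weighted blend of values

rng = np.random.default_rng(0)
n_tokens, d_model, d_head = 5, 16, 8             # illustrative sizes only
X = rng.normal(size=(n_tokens, d_model))         # stand-in for token embeddings
W_q = rng.normal(size=(d_model, d_head))
W_k = rng.normal(size=(d_model, d_head))
W_v = rng.normal(size=(d_model, d_head))

output, weights = self_attention(X, W_q, W_k, W_v)
print(output.shape)   # (5, 8)
print(weights.shape)  # (5, 5): one weight for every (query token, key token) pair
```

Note that all tokens are handled by the same few matrix multiplications, with no loop over positions. That is the parallelism the architecture is known for.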

Stacked attention layers (often with multiple "heads," each computing its own attention pattern in parallel) let the network build up an increasingly complex understanding of the sequence.

If this sounds abstract, the punchline is: every word can directly query every other word, and the weights of the blend are learned. The model figures out for each context what relationships matter.
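For readers who want to see what multiple heads look like concretely, here is a hedged NumPy sketch. It assumes the common layout in which the model width is split evenly across heads and a final output projection (`W_o` here) mixes the heads back together. All names and sizes are illustrative, and the weights are random rather than learned.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def multi_head_self_attention(X, W_q, W_k, W_v, W_o, n_heads):
    """Multi-head self-attention; X has shape (n_tokens, d_model)."""
    n_tokens, d_model = X.shape
    if d_model % n_heads != 0:
        raise ValueError("d_model must be divisible by n_heads")
    d_head = d_model // n_heads

    def split_heads(M):
        # (n_tokens, d_model) -> (n_heads, n_tokens, d_head)
        return M.reshape(n_tokens, n_heads, d_head).transpose(1, 0, 2)

    Q, K, V = split_heads(X @ W_q), split_heads(X @ W_k), split_heads(X @ W_v)
    scores = Q @ K.transpose(0, 2, 1) / np.sqrt(d_head)   # (n_heads, n_tokens, n_tokens)
    weights = softmax(scores, axis=-1)                     # separate pattern per head
    per_head = weights @ V                                 # (n_heads, n_tokens, d_head)
    # Put the heads back side by side, then mix them with the output projection.
    concat = per_head.transpose(1, 0, 2).reshape(n_tokens, d_model)
    return concat @ W_o

rng = np.random.default_rng(0)
n_tokens, d_model, n_heads = 5, 16, 4                      # illustrative sizes only
X = rng.normal(size=(n_tokens, d_model))
W_q, W_k, W_v, W_o = (rng.normal(size=(d_model, d_model)) for _ in range(4))

out = multi_head_self_attention(X, W_q, W_k, W_v, W_o, n_heads)
print(out.shape)  # (5, 16): same shape as the input, so layers can be stacked
```

Because the output has the same shape as the input, one such layer can feed directly into the next.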

Encoder vs decoder vs both

The original transformer had two halves: an encoder (processes the input) and a decoder (generates the output). This made sense for translation: encode English, decode French.

Three variants have emerged:

Encoder-only (BERT, RoBERTa, modern embedding models). Best for understanding tasks — classification, similarity, embeddings. The model produces a representation of the input; it doesn't generate.

Decoder-only (GPT, Claude, LLaMA, Gemini's main models). Best for generation tasks. The model predicts the next token given the previous ones, autoregressive style. Most "chat" LLMs are decoder-only.

Encoder-decoder (T5, original transformer, BART). Best for tasks that map one sequence to another — translation, summarization, question answering with specific inputs.

Decoder-only has dominated the LLM space because it's flexible — you can frame most language tasks as "given this prompt, generate continuation." Train a big decoder on enough text, and it can do almost anything.
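Two ingredients make a decoder "autoregressive," and both can be sketched briefly. The first is a causal mask, which stops each position from attending to later ones. The second is a generation loop that feeds every new token back in as input. In the sketch below, `toy_next_token_logits` is a purely hypothetical stand-in for a trained model (it always favors "previous token plus one"), and greedy argmax decoding is just the simplest possible choice.

```python
import numpy as np

def causal_attention_weights(scores):
    """Softmax over attention scores with future positions masked out."""
    n = scores.shape[0]
    future = np.triu(np.ones((n, n), dtype=bool), k=1)   # True above the diagonal
    masked = np.where(future, -np.inf, scores)           # future tokens get zero weight
    masked = masked - masked.max(axis=-1, keepdims=True)
    e = np.exp(masked)
    return e / e.sum(axis=-1, keepdims=True)

def generate(next_token_logits, prompt, n_new):
    """Greedy autoregressive decoding: append the most likely token, then repeat."""
    tokens = list(prompt)
    for _ in range(n_new):
        logits = next_token_logits(tokens)               # model sees everything so far
        tokens.append(int(np.argmax(logits)))
    return tokens

VOCAB_SIZE = 5  # illustrative only

def toy_next_token_logits(tokens):
    """Hypothetical stand-in for a trained decoder: prefers (last token + 1) mod vocab."""
    logits = np.zeros(VOCAB_SIZE)
    logits[(tokens[-1] + 1) % VOCAB_SIZE] = 1.0
    return logits

weights = causal_attention_weights(np.zeros((4, 4)))
print(weights[0])  # [1. 0. 0. 0.]: the first token can only see itself
print(weights[3])  # [0.25 0.25 0.25 0.25]: the last token sees all four
print(generate(toy_next_token_logits, prompt=[3], n_new=4))  # [3, 4, 0, 1, 2]
```

Real systems usually sample from the predicted distribution rather than always taking the argmax, but the loop has the same shape.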

Why they scale so well

The transformer architecture has a remarkable property: performance keeps improving as you make it bigger.

GPT-1 (2018, 117 million parameters) was promising but limited. GPT-2 (2019, 1.5 billion) was startlingly good. GPT-3 (2020, 175 billion) was a paradigm shift. GPT-4 and later models (2023-2025, with parameter counts mostly undisclosed but widely estimated at hundreds of billions to trillions) opened entirely new use cases.

Each generation, just by scaling up, the model gained capabilities that previous generations didn't have — emergent behaviour like in-context learning, few-shot reasoning, code generation, complex instruction following.

To a degree, this kind of scaling is specific to transformers. Earlier architectures didn't show such reliable scaling. The transformer's combination of attention (good inductive bias for sequence structure) and parallelism (efficient on GPUs) means more data and more compute keep paying off.
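To get a feel for where the parameter counts quoted above come from, a common back-of-the-envelope rule is roughly 12 × layers × width² for the non-embedding weights. The sketch below applies it under two assumptions that are not from this article: the standard layer layout (four width-by-width attention projections plus a feed-forward block with a hidden size of four times the width), and the layer counts and widths reported in the GPT papers. Treat the results as rough estimates.

```python
def approx_transformer_params(n_layers, d_model):
    """Rough non-embedding parameter count for a standard transformer stack.

    Per layer: 4 * d_model**2 for the attention projections (Q, K, V, output)
    plus 8 * d_model**2 for a feed-forward block of hidden width 4 * d_model.
    Biases, layer norms, and embedding tables are ignored.
    """
    return 12 * n_layers * d_model ** 2

# (n_layers, d_model) as reported in the respective papers; assumed, not from this article.
configs = {
    "GPT-1": (12, 768),
    "GPT-2": (48, 1600),
    "GPT-3": (96, 12288),
}

for name, (n_layers, d_model) in configs.items():
    billions = approx_transformer_params(n_layers, d_model) / 1e9
    print(f"{name}: ~{billions:.2f}B non-embedding parameters")
# GPT-1: ~0.08B, GPT-2: ~1.47B, GPT-3: ~173.95B
```

The estimates land close to the headline figures for the larger models. The gap for GPT-1 is mostly the embedding table, which this formula leaves out and which matters more when the model is small.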

How far this continues is the central open question in AI today. Models may already be hitting diminishing returns; they may not. Whether it makes sense to invest tens of billions of dollars in larger training runs depends on the answer.

Beyond text

Originally invented for language, the transformer architecture has spread far:

Vision Transformers (ViT). Treat images as sequences of patches. Each patch is like a "word." Attention works the same way. Given enough training data, ViTs now match or beat convolutional networks on many image tasks.

Protein Folding. AlphaFold2 (2020), which reached near-experimental accuracy on many targets of the 50-year-old protein structure prediction problem, is built around attention-based modules. Sequences of amino acids → 3D structure.

Audio. Speech recognition, music generation, voice synthesis — all increasingly transformer-based.

Decision Transformers. Reinforcement learning reframed as sequence prediction.

Robotics. Action sequences predicted by transformers given history of states.
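To make the Vision Transformer idea above concrete, here is a small NumPy sketch of the "image as a sequence of patches" step. The function name is hypothetical, and the 224×224 image with 16×16 patches is just a commonly used configuration chosen for illustration. A real ViT would follow this with a learned linear projection and position information, which are omitted here.

```python
import numpy as np

def image_to_patch_sequence(image, patch_size):
    """Cut an (H, W, C) image into non-overlapping patches and flatten each one.

    Returns an array of shape (num_patches, patch_size * patch_size * C),
    ordered row by row, so each row plays the role of one "word".
    """
    H, W, C = image.shape
    if H % patch_size != 0 or W % patch_size != 0:
        raise ValueError("image height and width must be divisible by patch_size")
    rows, cols = H // patch_size, W // patch_size
    patches = image.reshape(rows, patch_size, cols, patch_size, C)
    patches = patches.transpose(0, 2, 1, 3, 4)   # (rows, cols, patch, patch, C)
    return patches.reshape(rows * cols, patch_size * patch_size * C)

# Dummy image with distinct pixel values so patches are easy to check.
image = np.arange(224 * 224 * 3, dtype=np.float32).reshape(224, 224, 3)
tokens = image_to_patch_sequence(image, patch_size=16)
print(tokens.shape)  # (196, 768): a 14 x 14 grid of patches, each flattened to 768 numbers
```

From here on, the sequence of 196 patch vectors can be fed to the same attention machinery used for text.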

In retrospect, the transformer wasn't really about language. It was about handling sequences with attention, and almost everything in machine learning involves sequences in some form.

What transformers can't (easily) do

Some limitations:

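Quadratic cost in sequence length. Naive attention computes interactions between every pair of tokens, so cost scales as length². Long contexts (100K+ tokens) are expensive. Various optimizations help: FlashAttention cuts the memory traffic of exact attention, while sparse and sliding-window attention skip most token pairs. For full attention, though, the fundamental issue remains.

Memory. A naive implementation stores a score for every pair of tokens, and during generation the keys and values of all previous tokens have to be kept around. Long sequences therefore require huge amounts of memory.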

Compositional reasoning. Transformers pattern-match better than they reason from first principles. They can fail at problems requiring careful step-by-step logic, especially with unusual structure.

Continuous learning. Once a transformer is trained, updating it for new information is expensive. There's no easy "tell it a new fact" mechanism without retraining or external augmentation.
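The quadratic cost mentioned above is worth a quick calculation. The sketch below counts the entries in a naive attention score matrix for a single head in a single layer, converts that to memory assuming 2 bytes per score (16-bit floats, an assumption), and compares it with a causal sliding window. The window size of 4,096 is an arbitrary illustrative choice, and optimized kernels avoid storing the full matrix at all, so read these as numbers for the naive approach only.

```python
def full_attention_entries(n_tokens):
    """Number of pairwise scores when every token attends to every token."""
    return n_tokens * n_tokens

def sliding_window_entries(n_tokens, window):
    """Number of scores when each token attends to itself and up to window-1 earlier tokens."""
    return sum(min(i + 1, window) for i in range(n_tokens))

BYTES_PER_SCORE = 2   # assumes 16-bit floats
WINDOW = 4096         # illustrative window size

for n in (1_000, 10_000, 100_000):
    full = full_attention_entries(n)
    windowed = sliding_window_entries(n, WINDOW)
    gib = full * BYTES_PER_SCORE / 2**30
    print(f"{n:>7} tokens: {full:.1e} scores (~{gib:.2f} GiB per head per layer), "
          f"{windowed:.1e} with a sliding window")
```

Going from 1,000 to 100,000 tokens multiplies the sequence length by 100 but the number of full-attention scores by 10,000, while the windowed count grows only about linearly once the sequence is longer than the window.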

These are active research areas. New architectures (state-space models like Mamba, mixture-of-experts variants, transformer hybrids) are being explored. Whether they'll replace transformers or just augment them remains to be seen.

The takeaway

A transformer is a neural network built around the attention mechanism, which lets every position in a sequence directly look at every other position. The architecture is parallel (fast on GPUs), handles long-range relationships (because attention bypasses sequence distance), and scales beautifully (bigger models keep improving). Since the 2017 paper that introduced it, transformers have replaced essentially all alternative architectures for language and have spread into vision, protein folding, audio, and more. Nearly every consequential AI system you interact with today (ChatGPT, Claude, Gemini, most recent image generators, code assistants) is built on transformers or borrows heavily from them. Understanding the architecture goes a long way toward understanding what modern AI does.