
How modern AI systems actually work — neural networks, embeddings, transformers, and why language models still hallucinate.

Traditional programming: you write rules. For each input, you specify exactly what output the program should produce. This works great for tasks where the rules are clear — calculating a square root, sorting a list, processing a payment.

But what about tasks where the rules aren't clear? "Recognize whether this photo contains a cat." "Translate this sentence to French." "Predict whether this customer will churn." There's no clean rule-set for any of these. Decades of trying to hand-write the rules produced mediocre results.

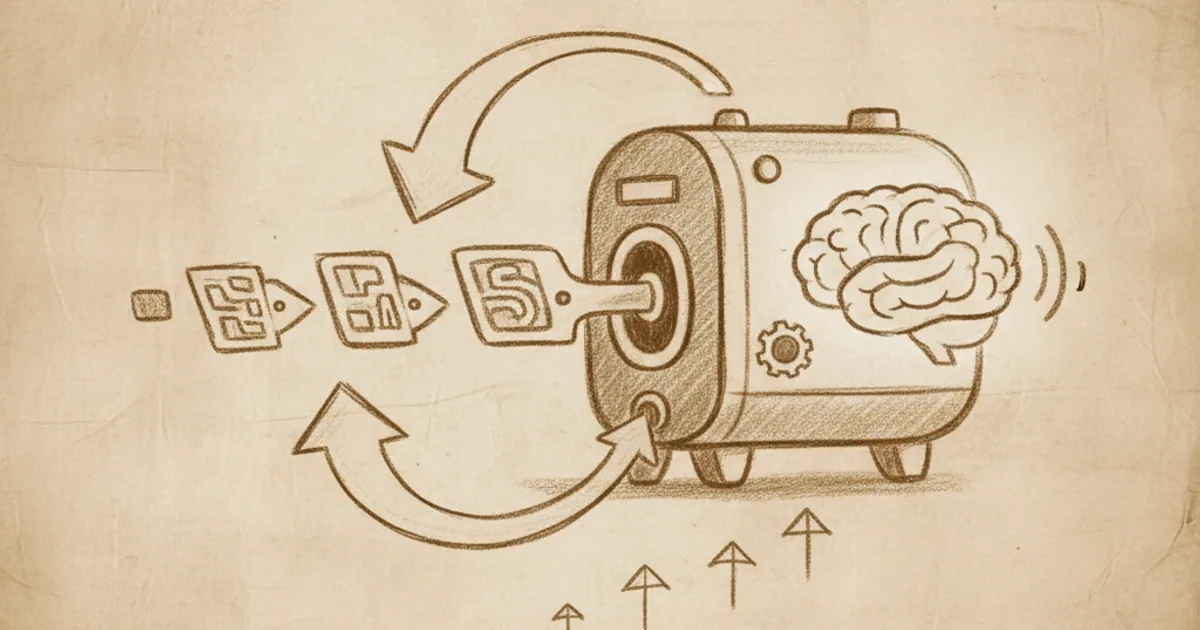

Machine learning is the alternative approach: instead of writing rules, you write a learning algorithm, feed it many examples of inputs and desired outputs, and let the algorithm adjust its own internal parameters until the inputs map to the right outputs.

The result is a program whose behaviour you didn't specify directly — you specified it indirectly, through the data.

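Almost all modern ML follows the same recipe: collect example data, choose a model with adjustable parameters, define a loss function that measures how wrong the model's outputs are, and run an optimizer that adjusts the parameters to reduce that loss.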

The trained model is a function: input → output. You can now apply it to new inputs (inference) and get plausible outputs.

The art lies in three choices: the model structure, the training data, and the loss function. Most ML research is about finding better answers to these questions.
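To make the recipe concrete, here is a minimal sketch in plain Python. The toy data (points on the line y = 2x + 1), the learning rate, and the step count are all assumptions chosen for illustration. Real systems use far larger models and libraries that compute gradients automatically.

```python
# Toy data: inputs x with desired outputs y, generated from y = 2x + 1
# (an assumption for illustration, not data from the article).
data = [(x, 2.0 * x + 1.0) for x in [0.0, 1.0, 2.0, 3.0, 4.0]]

def predict(w, b, x):
    """The model: a straight line with two adjustable parameters, w and b."""
    return w * x + b

def mse_loss(w, b, examples):
    """The loss: mean squared error between predictions and desired outputs."""
    return sum((predict(w, b, x) - y) ** 2 for x, y in examples) / len(examples)

def train(examples, lr=0.05, steps=2000):
    """The optimizer: plain gradient descent on the loss."""
    w, b = 0.0, 0.0
    n = len(examples)
    for _ in range(steps):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (predict(w, b, x) - y) * x for x, y in examples) / n
        grad_b = sum(2 * (predict(w, b, x) - y) for x, y in examples) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

w, b = train(data)
print(f"learned w={w:.3f}, b={b:.3f}, loss={mse_loss(w, b, data):.6f}")
print("inference on a new input:", predict(w, b, 10.0))
```

Nobody wrote the rule "multiply by 2 and add 1" into this program. The parameters ended up near those values because that is what minimizes the loss on the examples.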

Supervised learning. Your training data has labels — for each input, the correct output is given. The model learns to predict the label.

Example: training images of cats and dogs, each labeled "cat" or "dog." The model learns to output the right label for new images.
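As a rough sketch of learning from labeled examples, the snippet below trains a nearest-centroid classifier, one of the simplest supervised methods. It is a stand-in, not how real image classifiers work: the two numeric features (weight and "ear pointiness") and all the values are hypothetical, and real systems learn from raw pixels with neural networks.

```python
def fit_centroids(examples):
    """Training: compute the average feature vector for each label."""
    sums, counts = {}, {}
    for features, label in examples:
        if label not in sums:
            sums[label] = [0.0] * len(features)
            counts[label] = 0
        sums[label] = [s + f for s, f in zip(sums[label], features)]
        counts[label] += 1
    return {label: [s / counts[label] for s in sums[label]] for label in sums}

def classify(centroids, features):
    """Inference: return the label whose centroid is closest to the input."""
    def sq_dist(a, b):
        return sum((p - q) ** 2 for p, q in zip(a, b))
    return min(centroids, key=lambda label: sq_dist(centroids[label], features))

# Hypothetical labeled data: (weight in kg, ear pointiness from 0 to 1).
training = [
    ((4.0, 0.9), "cat"), ((3.5, 0.8), "cat"), ((5.0, 0.95), "cat"),
    ((20.0, 0.3), "dog"), ((30.0, 0.4), "dog"), ((12.0, 0.5), "dog"),
]
centroids = fit_centroids(training)

print(classify(centroids, (4.5, 0.85)))   # an animal not seen during training
print(classify(centroids, (25.0, 0.35)))
```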

Most practical ML is supervised. Spam filters, fraud detection, language translation, image classification, and voice recognition are all typically built on supervised learning.

Unsupervised learning. Your training data has no labels. The model has to find structure on its own.

Examples: clustering customers into groups based on purchase patterns; finding anomalies in network traffic; learning compressed representations (embeddings — see the embeddings article).
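The customer-clustering example can be sketched with k-means, a common clustering algorithm, here reduced to one dimension. The spend figures are made up, and starting the two centers at the minimum and maximum is a simplifying assumption. Library implementations use smarter initialization and work in many dimensions.

```python
def kmeans_1d(values, centers, iterations=10):
    """Alternate between assigning points to the nearest center and
    moving each center to the mean of its assigned points."""
    centers = list(centers)
    groups = [[] for _ in centers]
    for _ in range(iterations):
        groups = [[] for _ in centers]
        for v in values:
            nearest = min(range(len(centers)), key=lambda i: abs(v - centers[i]))
            groups[nearest].append(v)
        # Keep the old center if a group ends up empty.
        centers = [sum(g) / len(g) if g else c for g, c in zip(groups, centers)]
    return centers, groups

# Hypothetical monthly spend per customer. Note that there are no labels.
monthly_spend = [10, 12, 11, 95, 100, 105]
centers, groups = kmeans_1d(
    monthly_spend, centers=[min(monthly_spend), max(monthly_spend)]
)
print(centers)
print(groups)
```

The algorithm is never told that "low spenders" and "high spenders" exist. The two groups emerge from the structure of the data.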

Reinforcement learning. The model learns by trial and error, receiving rewards or penalties for actions taken in an environment.

Examples: AlphaGo learning to play Go (reward for winning); robots learning to walk (reward for going forward); large language models being fine-tuned with human feedback (reward for outputs humans rate well).
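Trial-and-error learning can be illustrated with a multi-armed bandit, about the smallest reinforcement learning problem there is. This sketch uses an epsilon-greedy strategy. The three win probabilities, the exploration rate, and the seed are assumptions for illustration, and systems like AlphaGo use far more elaborate methods.

```python
import random

def run_bandit(win_probs, steps=5000, epsilon=0.1, seed=0):
    """Epsilon-greedy agent: mostly pick the action with the best estimated
    reward so far, but explore a random action a fraction epsilon of the time."""
    rng = random.Random(seed)
    counts = [0] * len(win_probs)
    values = [0.0] * len(win_probs)  # running average reward per action
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.randrange(len(win_probs))  # explore
        else:
            action = max(range(len(win_probs)), key=lambda a: values[a])  # exploit
        # The environment returns a reward; the agent never sees win_probs.
        reward = 1.0 if rng.random() < win_probs[action] else 0.0
        counts[action] += 1
        values[action] += (reward - values[action]) / counts[action]
    return values, counts

values, counts = run_bandit([0.2, 0.5, 0.8])
print("estimated reward per action:", [round(v, 2) for v in values])
print("times each action was chosen:", counts)
```

The agent is never shown the right answer. It only receives rewards, and over time it concentrates its choices on the action that pays off most often.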

These three categories blur in practice. Modern systems often combine them: a language model is pre-trained without human labels (predicting the next word in a huge corpus, often called self-supervised learning), then fine-tuned with supervised learning (matching specific instruction-following examples), then refined with reinforcement learning from human feedback.

A key fact: ML models often get better when you give them more data, more parameters, and more compute. This scaling has been the dominant force in AI for the past 15 years.

GPT-3 (175 billion parameters, 2020) was vastly more capable than GPT-2 (1.5 billion, 2019), and not because the architecture changed much — mostly because it was bigger and trained on more data. Each new generation of large language models pushes this further.

The scaling pattern isn't magical. It's a specific empirical regularity: across many tasks, model performance (usually measured as loss) improves predictably with compute and data, often following power laws. This is what allows companies to bet large amounts of capital on training even larger models: they have rough expectations of how much the result will improve.
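A power law has the form loss = a · compute^(−b), which becomes a straight line on a log-log plot. The sketch below fits such a line to a few points and extrapolates one step further, which is roughly the kind of forecast the paragraph describes. All numbers are made up for illustration and are not measurements from any real model.

```python
import math

def fit_power_law(xs, ys):
    """Least-squares fit of y = a * x**(-b) as a straight line in log-log space."""
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    n = len(xs)
    mean_x, mean_y = sum(lx) / n, sum(ly) / n
    slope = (
        sum((u - mean_x) * (v - mean_y) for u, v in zip(lx, ly))
        / sum((u - mean_x) ** 2 for u in lx)
    )
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope

# Made-up (compute, loss) pairs that follow loss = 5 * compute**-0.1 exactly.
compute = [1e3, 1e4, 1e5, 1e6]
loss = [5.0 * c ** -0.1 for c in compute]

a, b = fit_power_law(compute, loss)
predicted = a * 1e7 ** -b  # extrapolate to 10x more compute than any data point
print(f"a={a:.3f}, b={b:.3f}, predicted loss at 1e7: {predicted:.3f}")
```

Real measurements are noisy, and an extrapolation like this is only as trustworthy as the assumption that the trend continues.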

Whether the scaling continues, and how far, is one of the most consequential open questions in the field.

ML works astonishingly well in many cases. It also breaks in characteristic ways:

Data bias. The model learns whatever pattern is in the training data, including biases the humans who collected it didn't notice. Facial recognition trained mostly on lighter skin works less well on darker skin. Hiring algorithms trained on past hiring decisions reproduce past hiring biases. The model is faithfully reflecting the data — but the data wasn't fair.

Distribution shift. The model works on inputs that look like the training data, but fails on inputs that look different. A self-driving car trained in California sunshine can fail in Boston snow.
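Distribution shift is easy to reproduce in miniature. In this sketch, a straight line is fitted to data from a curved relationship (y = x², a hypothetical stand-in for the real world) using only inputs between 0 and 1, and is then evaluated on inputs between 2 and 3.

```python
def fit_line(points):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(points)
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    slope = (
        sum((x - mx) * (y - my) for x, y in points)
        / sum((x - mx) ** 2 for x, _ in points)
    )
    return slope, my - slope * mx

def mean_abs_error(slope, intercept, points):
    return sum(abs(slope * x + intercept - y) for x, y in points) / len(points)

def truth(x):
    return x * x  # hypothetical "real world" relationship

train_points = [(i / 10, truth(i / 10)) for i in range(11)]            # x in [0, 1]
shifted_points = [(2 + i / 10, truth(2 + i / 10)) for i in range(11)]  # x in [2, 3]

slope, intercept = fit_line(train_points)
in_dist_error = mean_abs_error(slope, intercept, train_points)
shifted_error = mean_abs_error(slope, intercept, shifted_points)
print(f"error on training-like inputs: {in_dist_error:.3f}")
print(f"error on shifted inputs:       {shifted_error:.3f}")
```

The line is a reasonable approximation where it saw data and a poor one elsewhere. Nothing in the training error warns you about this.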

Adversarial examples. Deliberately crafted inputs can fool models that handle real inputs well. A few pixels changed in an image can flip a confident classification. This matters for security-critical applications.
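The effect can be sketched on a toy linear classifier. The weights, the 100-feature input, and the perturbation size below are all hypothetical. The attack nudges every feature slightly in the direction that lowers the score, which is the idea behind gradient-sign attacks on real networks, reduced to its simplest form.

```python
def score(weights, bias, x):
    return sum(w * v for w, v in zip(weights, x)) + bias

def classify(weights, bias, x):
    return "cat" if score(weights, bias, x) > 0 else "dog"

def adversarial(weights, x, epsilon):
    """Shift each feature by at most epsilon in the direction that lowers the score."""
    def sign(w):
        return (w > 0) - (w < 0)
    return [v - epsilon * sign(w) for w, v in zip(weights, x)]

# Hypothetical classifier with 100 input features ("pixels") and zero bias.
weights = [0.5 if i % 2 == 0 else -0.5 for i in range(100)]
bias = 0.0
original = [0.52 if i % 2 == 0 else 0.48 for i in range(100)]

perturbed = adversarial(weights, original, epsilon=0.03)
largest_change = max(abs(p - o) for p, o in zip(perturbed, original))

print(classify(weights, bias, original), score(weights, bias, original))
print(classify(weights, bias, perturbed), score(weights, bias, perturbed))
print("largest change to any feature:", round(largest_change, 3))
```

No single feature moves by more than 0.03, but the small changes all push the same way, so they add up across 100 features and flip the label.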

Optimization for the wrong thing. Loss functions are proxies for what you actually care about. Models learn to minimize the loss, even if that doesn't quite match the real goal. ML systems for engagement on social media optimize for clicks and time-on-site, which doesn't always equal user wellbeing.

Hallucination. Language models in particular produce confident-sounding outputs that aren't true. The model doesn't know it's wrong; it just generates statistically plausible text. This is covered in the hallucination article.

These aren't bugs — they're the expected consequences of how ML works. Engineering systems to handle them is most of the practical work in ML deployment.

Even if you'll never train a model, knowing what ML does and doesn't do helps you read claims about AI more accurately.

Understanding the recipe — collect data, define loss, optimize parameters — is a kind of bullshit detector. It lets you ask the right follow-up questions when someone is selling AI capabilities.


Machine learning is programming by example. You define a model with adjustable parameters, give it many examples of inputs and desired outputs, and let an optimization algorithm tune the parameters until the model handles the examples well. It works for problems where the rules are too complex to write by hand — recognizing images, translating language, predicting behaviour. It fails in predictable ways: biased data, distribution shift, adversarial inputs, optimizing for the wrong thing. The other articles in this cluster zoom in on the specific architectures and behaviours: neural networks, embeddings, transformers, and why language models hallucinate.
