The shortest possible answer

Entropy is a number that counts how many invisible arrangements look the same from the outside.

A messy room can be re-messed in a trillion different ways and you'd still call it messy. A tidy room only counts as tidy if everything is in its specific spot. There are vastly more "messy" arrangements than "tidy" ones, so when things shuffle randomly, they almost always shuffle toward "messy".

That's it. That's entropy.
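To put rough numbers on the messy-versus-tidy picture, here is a minimal Python sketch. It swaps the room for 100 coins, an assumption made purely for illustration: the big-picture description is how many coins show heads, and an arrangement is the exact heads/tails pattern. The function name `count_arrangements` is made up for this example.

```python
from math import comb

def count_arrangements(n_coins: int, n_heads: int) -> int:
    """Number of distinct heads/tails patterns with exactly n_heads heads."""
    return comb(n_coins, n_heads)

N = 100  # illustrative number of coins, not taken from the text

tidy = count_arrangements(N, N)        # all heads: only one way to do it
messy = count_arrangements(N, N // 2)  # half heads: a huge number of ways

print(f"all heads:  {tidy} arrangement")
print(f"half heads: {messy:.3e} arrangements")
```

With only 100 coins, the "half heads" description already covers roughly 10^29 arrangements against a single one for "all heads". A real room, or a real glass of water, has far more moving parts than that.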

The sugar cube experiment

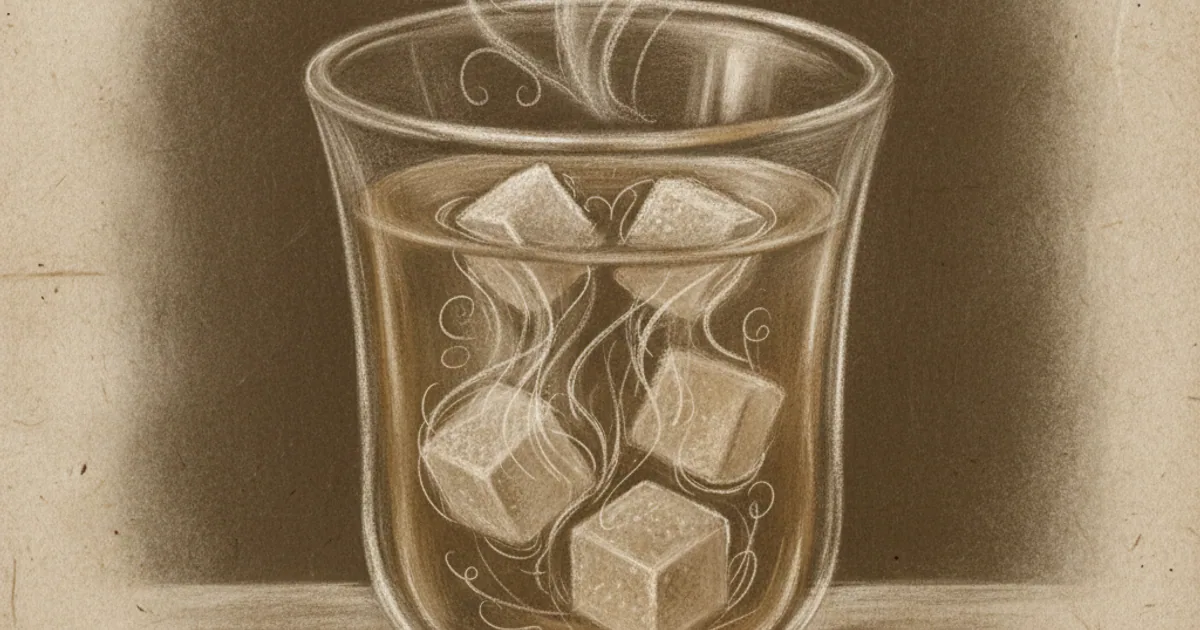

Drop a sugar cube into a glass of warm water. It dissolves. It never re-forms into a cube.

This is not because anti-cube energy is pulling the sugar apart. It's because:

- Comparatively few arrangements of the sugar molecules look like a cube.

- Astronomically many arrangements look like "dissolved sugar".

Random thermal motion shuffles the molecules constantly. To a good approximation, every individual arrangement is as likely as any other. But because dissolved arrangements vastly outnumber cube arrangements, the system almost always ends up dissolved. The "law" of increasing entropy is really just statistics.
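The shuffling argument can be tried out with a toy simulation. The sketch below is not a model of real sugar. It uses a deliberately simplified stand-in (essentially the Ehrenfest urn model): particles sit in either the left or right half of a box, they all start on the left, and each step moves one randomly chosen particle to the other side. The function name, particle count, and step count are all assumptions chosen for illustration.

```python
import random

def shuffle_particles(n_particles: int, n_steps: int, seed: int = 0) -> list[int]:
    """Start with every particle on the left; at each step move one randomly
    chosen particle to the other side. Returns the left-side count over time."""
    rng = random.Random(seed)
    on_left = [True] * n_particles   # the "cube": one very special arrangement
    left_count = n_particles
    history = [left_count]
    for _ in range(n_steps):
        i = rng.randrange(n_particles)   # pick a particle at random
        on_left[i] = not on_left[i]      # it hops to the other half
        left_count += 1 if on_left[i] else -1
        history.append(left_count)
    return history

history = shuffle_particles(n_particles=1000, n_steps=20000)
print("start:", history[0], "end:", history[-1])
```

Nothing in the rule prefers an even split, yet the left-side count should drift from 1000 toward about 500 and then hover there, simply because near-even splits correspond to so many more arrangements.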

Why this is the second law

The second law of thermodynamics says: in an isolated system (one that exchanges neither energy nor matter with its surroundings), total entropy never decreases.

People often state this as "the universe is becoming more disordered". That's fine as poetry. Mathematically, it's the inevitable consequence of random shuffling in a system with many possible states.

It's the law that gives the universe an arrow of time. Eggs scramble; they don't unscramble. Coffee cools; it doesn't reheat itself. Movies played backward look wrong because they show entropy decreasing.

"But my room can get tidy"

Yes, because you put work in. You spent energy organizing things, and that energy came from food, which ultimately came from the sun. Local entropy can decrease as long as total entropy increases by at least as much somewhere else. Your tidying releases heat (your body's metabolism), and that heat raises the entropy of your surroundings by more than your tidying lowers the entropy of the room.

There's no free lunch. Every refrigerator, every air conditioner, every living cell, is a local entropy-decreaser that pays for itself by raising entropy somewhere else.
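The bookkeeping can be sketched with numbers. The example below leans on the Clausius relation (entropy change equals heat divided by temperature), which this article doesn't otherwise introduce but which is the standard way to assign entropy to heat flow. The refrigerator figures are made up for illustration, and `entropy_change` is a hypothetical helper name.

```python
def entropy_change(heat_joules: float, temperature_kelvin: float) -> float:
    """Clausius relation: entropy change (J/K) when heat flows into (+) or
    out of (-) something held at a roughly constant temperature."""
    return heat_joules / temperature_kelvin

# Made-up numbers for one refrigerator cycle.
heat_removed = 100.0                     # J pulled out of the cold interior
work_input = 20.0                        # J of electrical work
heat_dumped = heat_removed + work_input  # J released into the kitchen

inside = entropy_change(-heat_removed, 275.0)  # interior at 275 K loses heat
kitchen = entropy_change(heat_dumped, 295.0)   # kitchen at 295 K gains heat
total = inside + kitchen

print(f"inside:  {inside:+.3f} J/K")
print(f"kitchen: {kitchen:+.3f} J/K")
print(f"total:   {total:+.3f} J/K")
```

The interior's entropy goes down, the kitchen's goes up by more, and the total comes out positive. That is the "pays for itself" part.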

The heat death of the universe

If entropy can only go up, what's the end state? Eventually, all energy is spread out so evenly that no useful work can happen. No temperature differences means no engines, no life, no anything. This is the heat death.

It's a long way off, many orders of magnitude longer than the current age of the universe, but it's where the math points.

What entropy is not

- Not "messiness" as a feeling. It's a precise quantity: the logarithm of a count.

- Not a force. Nothing pushes things toward higher entropy. It's a statistical inevitability.

- Not the same as randomness. Entropy belongs to the big-picture description, not to any single arrangement. One random-looking sequence has no entropy on its own; what matters is how many sequences fit the same description.

The one-line definition

Entropy is the log of the number of microstates consistent with a macrostate, multiplied by a constant (Boltzmann's constant) that sets the units. Or: how many ways the small stuff can be arranged without changing the big picture.

That's the formula carved on Boltzmann's tombstone: S = k log W, where W is the number of microstates.
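One way to see why the definition uses a log rather than the raw count is to compute it. The sketch below assumes all microstates are equally likely and uses the natural logarithm. The coin-box system and the name `boltzmann_entropy` are illustrative choices, not something from the text above.

```python
from math import comb, isclose, log

K_B = 1.380649e-23  # Boltzmann's constant in J/K (exact by SI definition)

def boltzmann_entropy(n_microstates: int) -> float:
    """S = k * ln(W) for a macrostate with W equally likely microstates."""
    return K_B * log(n_microstates)

w_one = comb(100, 50)   # one box of 100 coins, half of them heads
w_two = w_one * w_one   # two independent boxes: the counts multiply

s_one = boltzmann_entropy(w_one)
s_two = boltzmann_entropy(w_two)

print(f"one box:   {s_one:.3e} J/K")
print(f"two boxes: {s_two:.3e} J/K")
print("entropies add:", isclose(s_two, 2 * s_one))
```

Counts multiply when you combine independent systems, but their logs add. Taking the log is what lets entropy behave like an ordinary "amount": two identical boxes have twice the entropy of one.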